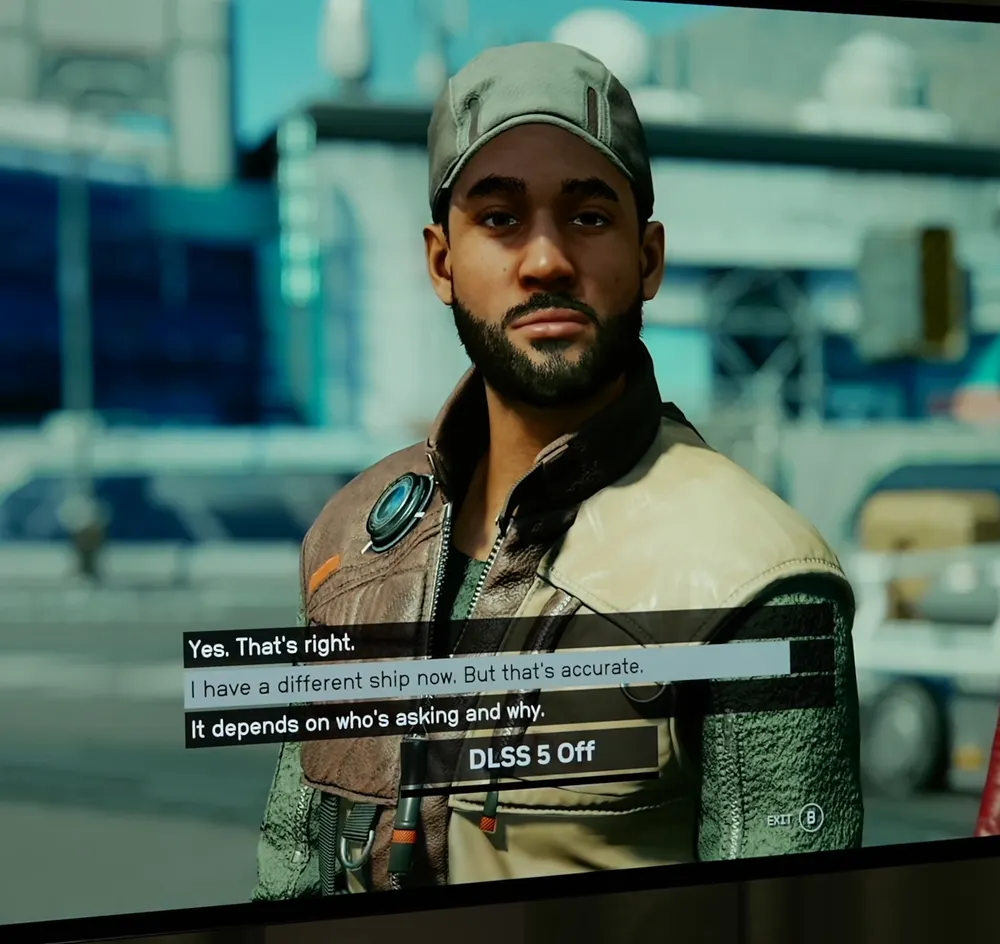

I would say, after seeing the Requiem and Starfield images, that the faces look better and more realistic with DLSS 5, but the backgrounds/environments look better with it turned off.

This is blowing up a bit on Bluesky of all places. Right now I’m seeing the image of Tommy from Starfield, and someone noticed that they yassified his eyelashes too.

The lighting really is looking like Starfield did on launch, it’s all too bright and washed out.

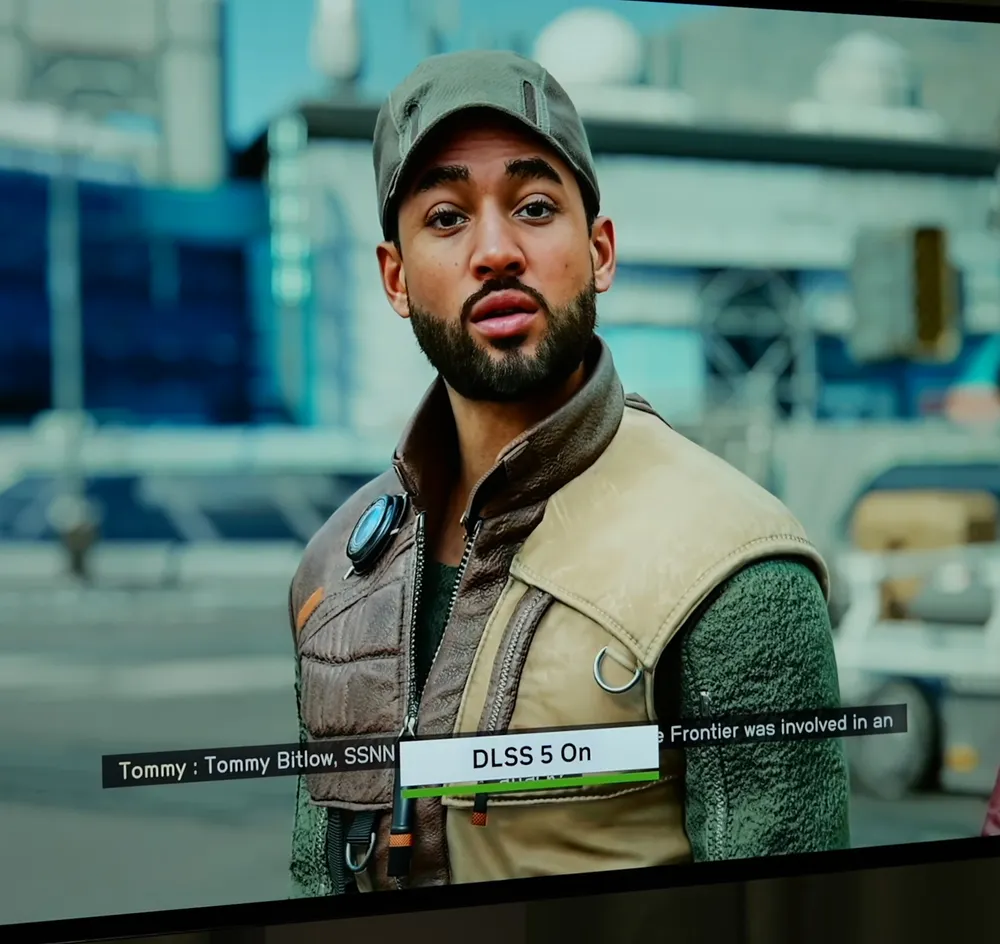

Also this image on a response was something else.

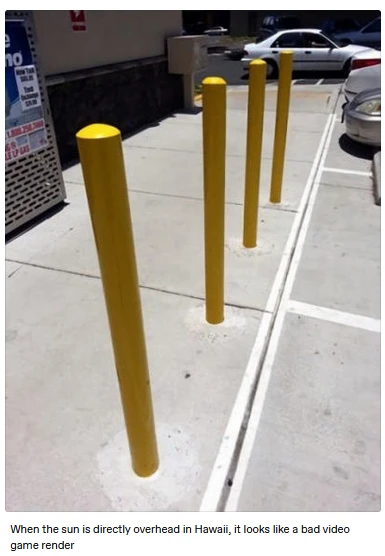

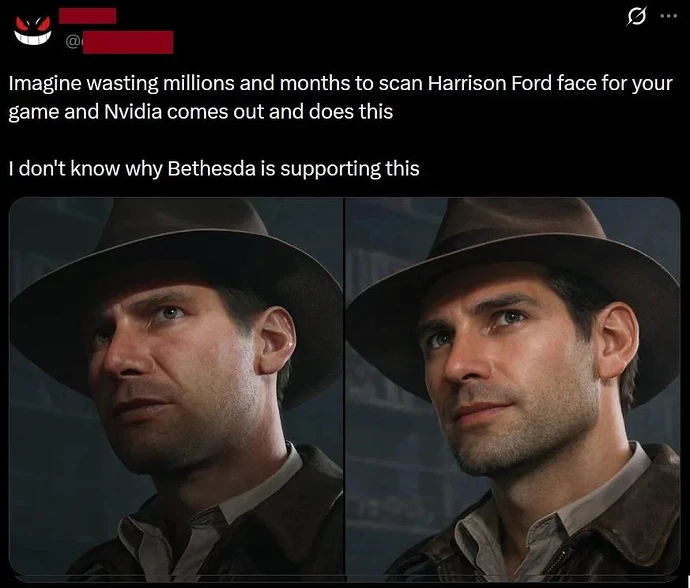

And once again, in the original scene the light is coming from above him, so the hat brim is casting a shadow on his face… In the DLSS 5 version he’s got a spotlight on him from directly in front.

The lighting in the scene has no direction anymore at all. There’s no sense of the character model being embedded in the world.

I don’t know what to think. I guess we have passed a threshold and it will be hard to go back with this kind of technology. I can see most people eating it up.

I try to look for artifacts or some of these hallucinations, but I have a hard time pinpointing what looks wrong. It’s just that uncanny valley feeling that tells me it looks fake, but that comes from having looked at so much AI shit, like most of us have. It looks exactly like those fake remakes people make with AI and post on Twitter.

The lighting is obviously the biggest thing, so we will have to see it in motion. I want to see the characters in a dark room to see how the technology copes with the lack of data. Why does the lighting always seem to be perfectly placed?

I think this technology is missing the point of a natural look. It doesn’t look natural at all. The DLSS-off image looks more real and grounded, even though it looks worse.

It is baffling that this feature is coming from the RTX brand, which has been all about accelerating correct lighting & reflections and making it efficient to render at high image quality with DLSS. It is one of the few AI things that people love, so why would they add such an unnecessary, artless feature like that?

Imo, it doesn’t look better, neither the character models nor the lighting, as too much of the game’s charm is lost to get an approximation of how an AI model thinks a real person should look.

Characters somehow look more plastic-like because of the changes to the model, the lighting, and the added lighting on the models. It all comes together in a way that looks studio-like instead of natural.

Yeah that’s the bit I’m most confused by - if this is removing the lighting when it’s generating faces, clothes and backgrounds, does it still do ray tracing or any of the RTX features?

Because if they can’t be seen due to the new rendering, what’s the point of having ray tracing (a notoriously heavy GPU tech) at all?

Lord that is actually horrific

It actually kinda sucks that resources are being poured into doing this with the technology. It reminds me of when Google first started infusing AI into its search and we got laughable results. Or the same with Apple and Siri. I can believe this is supposed to get better and it probably will, but do we actually want it? Search might have been a bad example because as the Google search algorithm has been ruined with SEO and ads, AI search combing through the internet could have a genuine function. This is just weird… I genuinely don’t know how one could even benefit from this.

I’m torn a little as I was blown away by the Matrix Awakens UE5 experience, but you could tell much of it was on rails to allow for pre-rendering - and some kind of tech that allows that type of realism in games would be cool, particularly in the hands of the Coalition who helped on Awakens.

I do also love games with simpler / cartoony bright graphics like Powerwash Simulator or Escape Academy, so wouldn’t want realism taking over completely.

This however isn’t quite that anyway - the generic looks in particular, while realistic, are plain, and in some areas like the Hogsmeade scene it added sod all.

I’m not particularly fussed by the “AI” part of it, it’s done locally on the GPU so not going to eat data centre power, but I would prefer to see it producing output closer to the dev’s rendering than completely re-doing some faces into bland generic “human face”.

I’m intrigued to see how it develops I’ll admit - between UE5 features and tech like this (including whatever AMD cooks up) that eventually matures and becomes part of the dev pipeline like upscaling and ray tracing eventually did, maybe realistic games are a possibility.

But it really should be more about enhancing what’s there whereas at times this seems to actually replace the source material…

There is one area I foresee issues too - violence.

If they train this on real gore, it could be so realistic it gives kids nightmares (imagine skin hanging off and entrails hanging out) so would be unavailable in many games that didn’t have an 18+ rating or it’d lead to media outrage.

But if, as is likely, they don’t do much with violence, then it could be that you shoot this super realistic enemy and the usual fake-looking blood splats out and a mannequin-looking body falls to the ground looking nothing like the enemy - which would very obviously pull you out of the illusion.

Search is still a relevant one because AI was one of the main reasons why they made it even worse in such a short time.

They want people staying on Google using the AI tool to get their information.

I don’t know if this is real but, yikes dude.

I’ll have to look into it later though.

Edit: probably made with something else as I don’t see it on the DF video.

Not seeing anything on Nvidia’s press release either. But someone pointed out that Leon gets more chin added in the example they showed of him.

Careful. It’s a meme by now, so we just have to go by the actual video. Shame, I was rather enjoying Leon’s one-liner meme.

I hope you enjoy Leon’s extended chin too, from the video.

It really isn’t all bad.

Obviously Grace on the street is just weird, but the later part isn’t bad at all. I don’t understand why she looks so low detailed in that part either in vanilla RE9 though. For some reason it really isn’t too bad at all for Leon either, you can see his stubble way better.

And then when you see Oblivion and also Hogwarts with the better lighting …I honestly can’t hate. Locations and lighting do look better. The old lady and student from Hogwarts on the other hand…nah!

So you need 2 (two) 5090 graphics cards for this?

So yeah, now it’s dead on arrival. I can’t see people wanting that when games already look insane on PC with path tracing.

It feels like they are trying to use AI shit for anything after investing so much in it.

There are a lot of people on the internet talking about engineering who really know nothing about it. Not every release is going to seem amazing, but that is part of the iterative process. Each release builds on the previous one. For example, DirectX:

DirectX 8. Added programmable shaders. The language was assembly-like. Good idea but hard to use.

DirectX 9. Replaced the assembly-like language with HLSL. Everyone loved it. Even OpenGL copied the idea.

DirectX 10. Rearchitected DirectX. Not a great response from developers.

DirectX 11. Because of the rearchitecting done in DirectX 10, it was really able to shine.